Dell PowerEdge FX2: VSAN Disk Configuration Steps

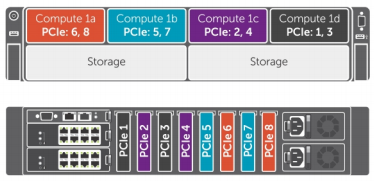

When you get your new Dell FX2s out of the box and power it on for the first time, you will notice that the disk configuration has not been set up with VSAN in mind. If you were to log into ESXi on the blades in SLOT1a and SLOT1c, you would see that each host has each SAS disk configured as a datastore. There is a little pre-configuration to do in order to get the drives presented correctly to the blade servers, as well as removing the datastores and reconfiguring the disks from within ESXi.

![](/images/2015/09/fx2_qtrsrg-300x145.png)

With my build I had four FC430 blades with two FD332 storage sleds, each containing 4x200GB SSDs and 8x600GB SAS drives. By default the storage mode is Split Single Host, which results in all the disks being assigned to the hosts in SLOT1a and SLOT1c, with both controllers in each sled also assigned to that single host.

You can configure individual storage sleds containing two RAID controllers to operate in the following modes:

- Split-single - Two RAID controllers are mapped to a single compute sled. Both controllers are enabled and each controller is connected to eight disk drives.

- Split-dual - Both RAID controllers in a storage sled are connected to two compute sleds.

- Joined - The RAID controllers are mapped to a single compute sled. However, only one controller is enabled and all the disk drives are connected to it.
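As a rough sketch of how the three modes hand out disk slots, the helper below prints which compute sled sees a given slot. It is an illustration only, not a Dell tool: `slot_owner` is a hypothetical function name, and it assumes a sled with 16 slots numbered 0 to 15, with slots 0-7 going to the first blade and 8-15 to the second when Split-dual is selected.

```shell
#!/bin/sh
# Hypothetical helper (not part of any Dell tooling): report which compute
# sled sees a given storage sled slot in each storage mode.
# Assumption: 16 slots numbered 0-15; in split-dual, 0-7 go to the first
# compute sled and 8-15 to the second.
slot_owner() {
  mode=$1
  slot=$2
  if [ "$slot" -lt 0 ] || [ "$slot" -gt 15 ]; then
    echo "invalid"
    return 1
  fi
  case "$mode" in
    split-dual)
      if [ "$slot" -le 7 ]; then
        echo "first"
      else
        echo "second"
      fi
      ;;
    split-single|joined)
      # Every slot is presented to the one mapped compute sled.
      echo "single"
      ;;
    *)
      echo "unknown-mode"
      return 1
      ;;
  esac
}

# Show the boundary slots for split-dual mode.
for slot in 0 7 8 15; do
  echo "split-dual slot $slot -> $(slot_owner split-dual "$slot") compute sled"
done
```

In Split-dual each blade therefore gets half of whatever is populated in the sled, which is why the drives need to be spread evenly across both halves.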

To take advantage of the FD332-PERC (Dual ROC) controller you need to configure Split Dual Host mode. All hosts need to be powered off before the default storage mode can be changed. Head to Server Overview -> Power and from there gracefully shut down all four servers.

![](/images/2015/11/fx2_3.png)

Once the servers have been powered down, click on the storage sleds in SLOT-03 and SLOT-04 and go to the Setup tab. Change the Storage Mode to Split Dual Host and click Apply.

![](/images/2015/11/fx2_2.png)

To check the distribution of the disks, launch the iDRAC for each blade, go to Storage -> Enclosures and check that each blade now has 2 SSDs and 4 HDDs assigned. The FD332 has 16 slots in total, with 0-7 belonging to the first blade and 8-15 belonging to the second blade. Shown below is the config of SLOT1a.

![](/images/2015/11/fx2_5.png)

The next step is to reconfigure the disks within ESXi to make sure VSAN can claim them when configuring the disk groups. Part of the process below is to delete any datastores that exist and clear the partition table. By far the easiest way to achieve this is via the new Embedded Host Client.

Install the Embedded Host Client on each host:

esxcli software vib install -v http://download3.vmware.com/software/vmw-tools/esxui/esxui-signed.vib

Installation Result

Message: Operation finished successfully.

Reboot Required: false

VIBs Installed: VMware_bootbank_esx-ui_0.0.2-0.1.3172496

VIBs Removed:

VIBs Skipped:

Log into the hosts via the Embedded Host Client at https://host_ip/ui, go to the Storage menu and delete any datastores that were preconfigured by Dell.

![](/images/2015/11/fx2_vsan_prep1.png)

Click on the Devices tab in the Storage menu and clear the partition table on each disk so that VSAN can claim the disks whose datastores have just been deleted.

![](/images/2015/11/fx2_vsan_prep2.png)

From here all disks should be available to be claimed by VSAN to create your disk groups.

![](/images/2015/11/fx2_vsan_prep3.png)

As a side note, it's worth reading up on the important driver and firmware updates for the PERC: http://anthonyspiteri.net/vsan-dell-perc-important-driver-and-firmware-updates/

References: http://www.dell.com/support/manuals/au/en/aubsd1/dell-cmc-v1.20-fx2/CMCFX2FX2s12UG-v1/Notes-cautions-and-warnings?guid=GUID-5B8DE7B7-879F-45A4-88E0-732155904029&lang=en-us
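If you would rather double-check from the ESXi shell that no partitions are left before VSAN tries to claim the disks, something like the sketch below may help. It only reports and does not delete anything. It relies on `partedUtil getptbl`, which ships with ESXi. The function names are my own, and it assumes that the disks show up as `naa.*` devices and that the output is a label line, a geometry line and then one line per partition, so adjust it if your devices are named differently.

```shell
#!/bin/sh
# Sketch only: list disks that still carry partitions so they can be cleared
# before VSAN claims them. Intended for the ESXi shell; function names are
# hypothetical.

# Succeeds if `partedUtil getptbl` lists at least one partition for the disk.
# Assumption: output is a label line, a geometry line, then one line per
# partition, so more than two lines means partitions exist.
has_partitions() {
  lines=$(( $(partedUtil getptbl "$1" | wc -l) ))
  [ "$lines" -gt 2 ]
}

# Print every whole-disk device in the given directory that has partitions.
# Assumption: disks appear as naa.* and partition nodes as naa.xxx:N.
report_partitioned_disks() {
  dir=${1:-/vmfs/devices/disks}
  for disk in "$dir"/naa.*; do
    [ -e "$disk" ] || continue
    case "$disk" in
      *:*) continue ;;   # skip partition nodes such as naa.xxx:1
    esac
    if has_partitions "$disk"; then
      echo "$disk"
    fi
  done
}

# Only run automatically where partedUtil is available (an ESXi host).
if command -v partedUtil >/dev/null 2>&1; then
  report_partitioned_disks
fi
```

Bear in mind that the ESXi boot device will normally show up in this list as well if it is presented as an `naa.*` device, so an empty result is not necessarily the goal. The point is that none of the disks destined for VSAN appear.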
