NSX Bytes - Controller Deployment Gotchyas

There are already a lot of great posts out there on installing and configuring NSX. Rather than reinvent the wheel, I’ve decided to do a series of NSX Bytes covering a couple of gotchyas I’ve come across during the config stage. This post focuses on the NSX Controller deployment, which provides a control plane to distribute network information to the hosts in your VXLAN Transport Zone.

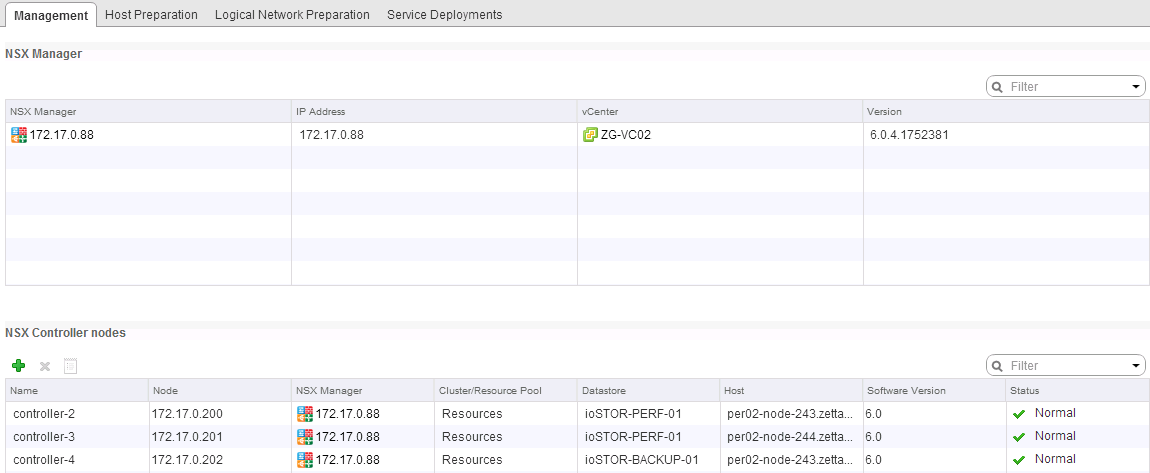

To get to this point you will already have deployed the NSX Manager VM and prepared your management network, which you specify in the IP Pool setup of the deployment. It’s suggested that you deploy three NSX Controllers for HA and resiliency. If successful, you should see the Management tab in the Networking and Security section of the vCenter Web Client looking like this:

In my first attempt I managed to deploy all three controllers without issue. In my second lab, however, I ran into a couple of issues that initially had me scratching my head. It must be noted that there isn’t much, if any, error feedback provided via the vCenter Client. To get more detail I enabled SSH in the NSX Manager GUI and tailed the manager log by running the command:

NSX-01# show manager log follow

The output is verbose but useful, and I’d encourage you to get familiar with it. There is also a Syslog setting that can send the logs to an external monitoring system if you wish.
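Because the log is so verbose, it can help to capture the session output to a file and filter it afterwards. Below is a minimal sketch of that idea in Python. The keyword list is my own assumption and not something taken from the NSX documentation, so adjust it to whatever your manager log actually contains.

```python
import re
from typing import Iterable, Iterator, Sequence

# Hypothetical keywords, chosen for illustration only.
KEYWORDS = ("controller", "timeout", "error")


def filter_log_lines(lines: Iterable[str],
                     keywords: Sequence[str] = KEYWORDS) -> Iterator[str]:
    """Yield only the lines that mention one of the keywords (case-insensitive)."""
    pattern = re.compile("|".join(re.escape(k) for k in keywords), re.IGNORECASE)
    for line in lines:
        if pattern.search(line):
            # Strip the trailing newline so callers can print or collect cleanly.
            yield line.rstrip("\n")
```

To use it, open the saved log with `open(...)` and pass the file object straight in, since a file object iterates line by line.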

ISSUE 1: No Host is Compatible with the Virtual Machine

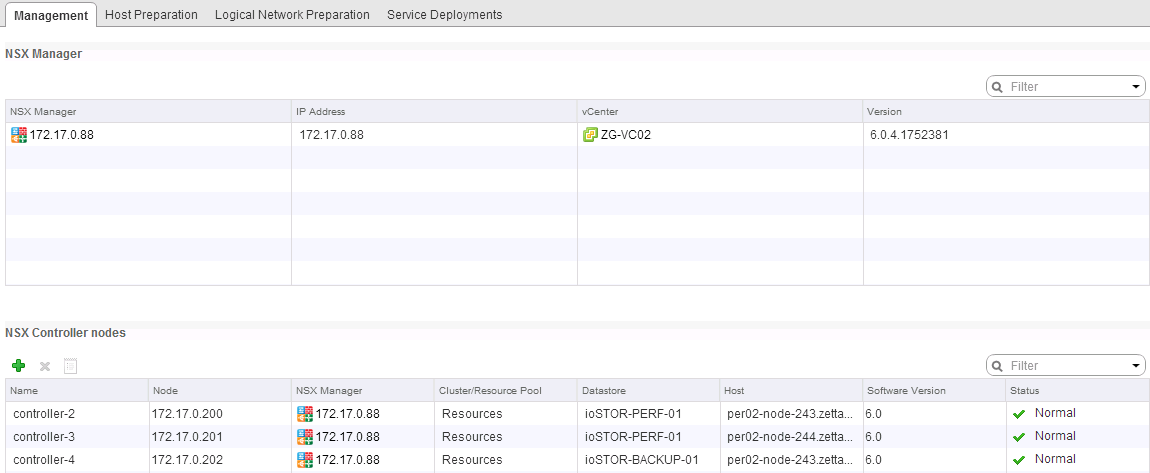

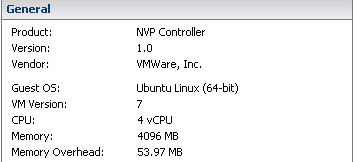

After kicking off a new controller build, you see vCenter deploy the OVF template, reconfigure the VM, and then power off and delete the VM.

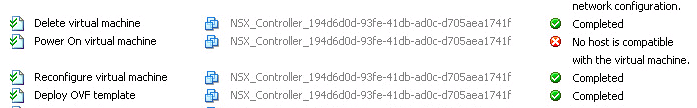

This happened because the resource requirements of the Controller template could not be met, specifically the vCPU count. The ESXi hosts in my lab were only capable of running VMs with 2 vCPUs (1 socket, 2 cores), and because of that the deployment failed.

The key here is to ensure that your hosts can meet the spec shown above. This issue should be restricted to lab hosts, but nonetheless it’s one to look out for just in case.
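If you keep a simple inventory of your lab hosts, the check above is easy to do up front. The sketch below assumes you already know each host's logical CPU count and the vCPU count the controller template asks for. The `Host` class, the host names, and the `required_vcpus` value of 4 in the usage note are all illustrative placeholders, so read the real requirement from the template for your NSX version.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Host:
    name: str
    logical_cpus: int  # a VM cannot be given more vCPUs than the host has logical CPUs


def compatible_hosts(hosts: List[Host], required_vcpus: int) -> List[str]:
    """Return the names of hosts that can run a VM with the required vCPU count."""
    return [h.name for h in hosts if h.logical_cpus >= required_vcpus]
```

For example, with a hypothetical `Host("lab-esxi-01", 2)` and `Host("lab-esxi-02", 4)`, calling `compatible_hosts(hosts, 4)` returns only `lab-esxi-02`. An empty result is the situation I hit in my lab.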

ISSUE 2: Controller VM Appears to Deploy Successfully, Then Gets Deleted

After kicking off a new controller build, you see the OVF template deploy and the VM start up. If you launch the VM console, you will see the Controller being configured with the right IP Pool settings and rebooting into a ready state. Then, after about 10 minutes and without warning, the VM is shut down and deleted.

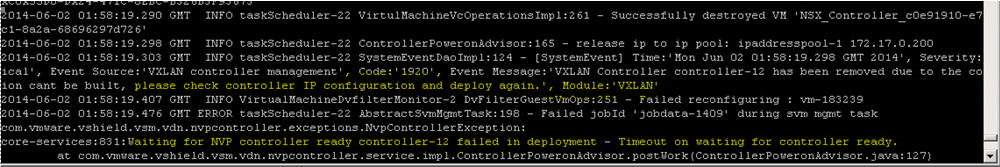

Checking through the Manager logs, you see this entry relating to the destruction of the Controller VM:

This one is actually pretty easy, and was user error on my part. The logs clearly state that there was a timeout waiting for the controller to be ready. This was due to the wrong Connected To network being selected during the Add Controller phase. This network must be able to reach the NSX Manager and the vSphere components. Again, it’s an obvious error once you view the logs, but initially it just looked like vCenter, via the NSX service account, was deleting the VM for the hell of it.
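One cheap sanity check before clicking Add Controller is to confirm that the IP Pool you are about to use actually belongs to the subnet carried by the network you select under Connected To. The sketch below uses Python's standard `ipaddress` module. The function name and the addresses in the usage note are made up for illustration, and the subnet of each port group is something you would supply from your own records. It only catches a mismatch on paper and does not prove the network can reach the NSX Manager.

```python
import ipaddress


def pool_fits_network(pool_start: str, pool_end: str,
                      gateway: str, network_cidr: str) -> bool:
    """Return True if the pool range and its gateway all sit inside network_cidr."""
    # strict=False lets the caller pass a host address with a prefix length.
    net = ipaddress.ip_network(network_cidr, strict=False)
    addresses = [ipaddress.ip_address(a) for a in (pool_start, pool_end, gateway)]
    return all(addr in net for addr in addresses)
```

For example, a pool of `192.168.10.50` to `192.168.10.52` with gateway `192.168.10.1` fits `192.168.10.0/24`, but the same pool checked against `192.168.20.0/24` returns `False`, which is the kind of mismatch that would leave a controller unable to report back.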

Note: For more detailed install guides, check out Chris Wahl’s post (http://wahlnetwork.com/2014/04/28/working-nsx-deploying-nsx-manager/) and Anthony Burke’s post (http://networkinferno.net/installing-vmware-nsx-part-1) to get up to speed on initial NSX Manager, Controller and Transport prep.
