Opinion: VMware and NVIDIA Step Up on Private AI for Enterprises

It’s no surprise that generative AI featured heavily at VMware Explore US last week in Vegas. 2023 is the year that most large tech companies are jumping on this bandwagon and trying to ride it all the way to the top of the hype curve and then down the other side. So, here are some thoughts on what NVIDIA and VMware announced last week.

A Strategic Move in the AI Space

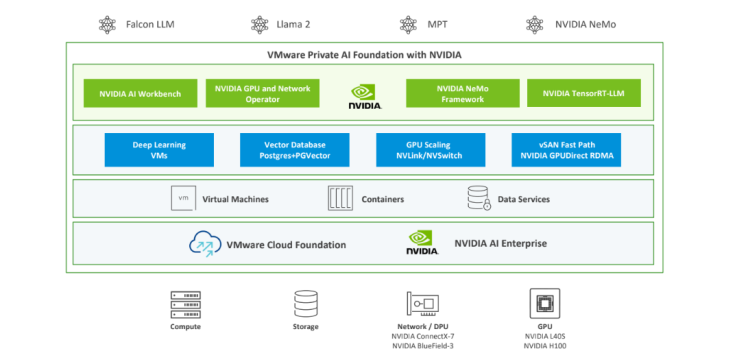

Building on an existing partnership, VMware and NVIDIA announced the VMware Private AI Foundation at VMware Explore 2023. This isn’t merely a product launch. As I sat in the audience and heard Jensen talk about the partnership, it made sense to me, and it marks a significant advancement in AI for enterprise applications powered by VMware virtualization. The platform is optimized for running generative AI applications like chatbots, assistants, and search, to name a few.

What It Offers

The Private AI Foundation will feature NVIDIA’s NeMo framework, integrated within the VMware Cloud Foundation, to create a comprehensive, cloud-native AI solution. This enables enterprise data to stay private while also benefiting from the generative capabilities of AI, minimizing both IP risk and compliance issues.

The Need for a Private AI Solution

The move to offer a private AI solution comes amid increased enterprise concerns regarding data privacy and intellectual property risks. The Private AI Foundation will allow businesses to run AI services wherever their data resides, ensuring a secure, private environment for AI deployment. This has been one of the biggest concerns and questions in the generative AI space since OpenAI burst onto the scene late in 2022.

What Sets It Apart

Apart from tapping into VMware’s existing base of vSphere customers and partners, the new platform is being touted on four fronts:

- Performance: The platform promises performance equal to or even exceeding bare metal in certain cases, according to industry benchmarks.

- Choice: A wide array of options for building and running models, everything from NVIDIA NeMo to Llama 2 and more, ensures flexibility in model development.

- Scale: The platform supports GPU scaling in virtualized environments for up to 16 vGPUs/GPUs in a single virtual machine. This allows for quicker fine-tuning and deployment of generative AI models.

- Cost: Optimizations across CPUs, GPUs, and DPUs are expected to lower the overall costs, ensuring resource efficiency.

Third-Party Support

The platform is expected to garner support from leading tech companies like Dell Technologies, Hewlett Packard Enterprise, and Lenovo, further cementing its place in the enterprise AI landscape. Those vendors will look to cash in on server, storage, and networking hardware sales that complement the NVIDIA hardware in this solution.

Conclusion

VMware and NVIDIA are trying to position themselves at the forefront of enterprise AI solutions. Again, I am bought in and impressed with this collaboration, as it promises to equip businesses across sectors with the tools they need to harness the potential of generative AI effectively, securely, and, more importantly, within their own controls. With a slated release in early 2024, the Private AI Foundation will be an interesting test of how committed VMware’s customers are to the datacenter.