Prompt Engineering - Custom GPTs Are Changing the Way We Interact with Technology

Back in November 2023, OpenAI introduced custom versions of ChatGPT that combine instructions, extra knowledge, and any combination of skills, and that can be created by ChatGPT users… The idea was essentially an extension of what you could already do with ChatGPT and its plugin library (which is very hit and miss), with the added ability to tap into external sources for up-to-date data processing.

We’re rolling out custom versions of ChatGPT that you can create for a specific purpose—called GPTs. GPTs are a new way for anyone to create a tailored version of ChatGPT to be more helpful in their daily life, at specific tasks, at work, or at home—and then share that creation with others. For example, GPTs can help you learn the rules to any board game, help teach your kids math, or design stickers. Anyone can easily build their own GPT—no coding is required. You can make them for yourself, just for your company’s internal use, or for everyone. Creating one is as easy as starting a conversation, giving it instructions and extra knowledge, and picking what it can do, like searching the web, making images or analyzing data.

The idea is that a marketplace full of community-made custom GPTs could be created and then, to some extent, monetized… Remember when Sam Altman was briefly removed as CEO and from the board of OpenAI, and then reinstated almost as quickly? Setting aside the Microsoft power play that was occurring, if you believe what you read at the time, the GPT Store and its monetization were central to that drama. That aside, custom GPTs can be a great way to dabble in the power of Generative AI and, from a Prompt Engineering point of view, to start understanding the power we all now have more readily at our fingertips. OpenAI offers this as a public model, which is effectively LLM-as-a-Service on a super-enhanced scale.

A Working Example with the Veeam Service Provider Console APIs

You will need a ChatGPT Plus subscription to create custom GPTs. For more information, check out this post: https://openai.com/blog/introducing-gpts

A quick note before I dive into this example. It's obviously not best practice to expose your API endpoints directly on the internet; however, for the purpose of this demo, and given that some form of token-based authentication is in use (the token has to be generated and entered), I would hope all the security people hold their pitchforks at bay. That said, there are services like ngrok that can securely expose an API endpoint, and in fact I should have used one for this example; however, I've had this particular Veeam Service Provider Console endpoint exposed for demo purposes for a number of years now.

Working with the API:

For ChatGPT to interact with the API endpoint, we need to define and create an OpenAPI schema definition of the endpoint(s) we want to interact with. The first step is to create a new GPT from the ChatGPT menu. Once that's done, you can enter some basic information and then head down to the Actions section.

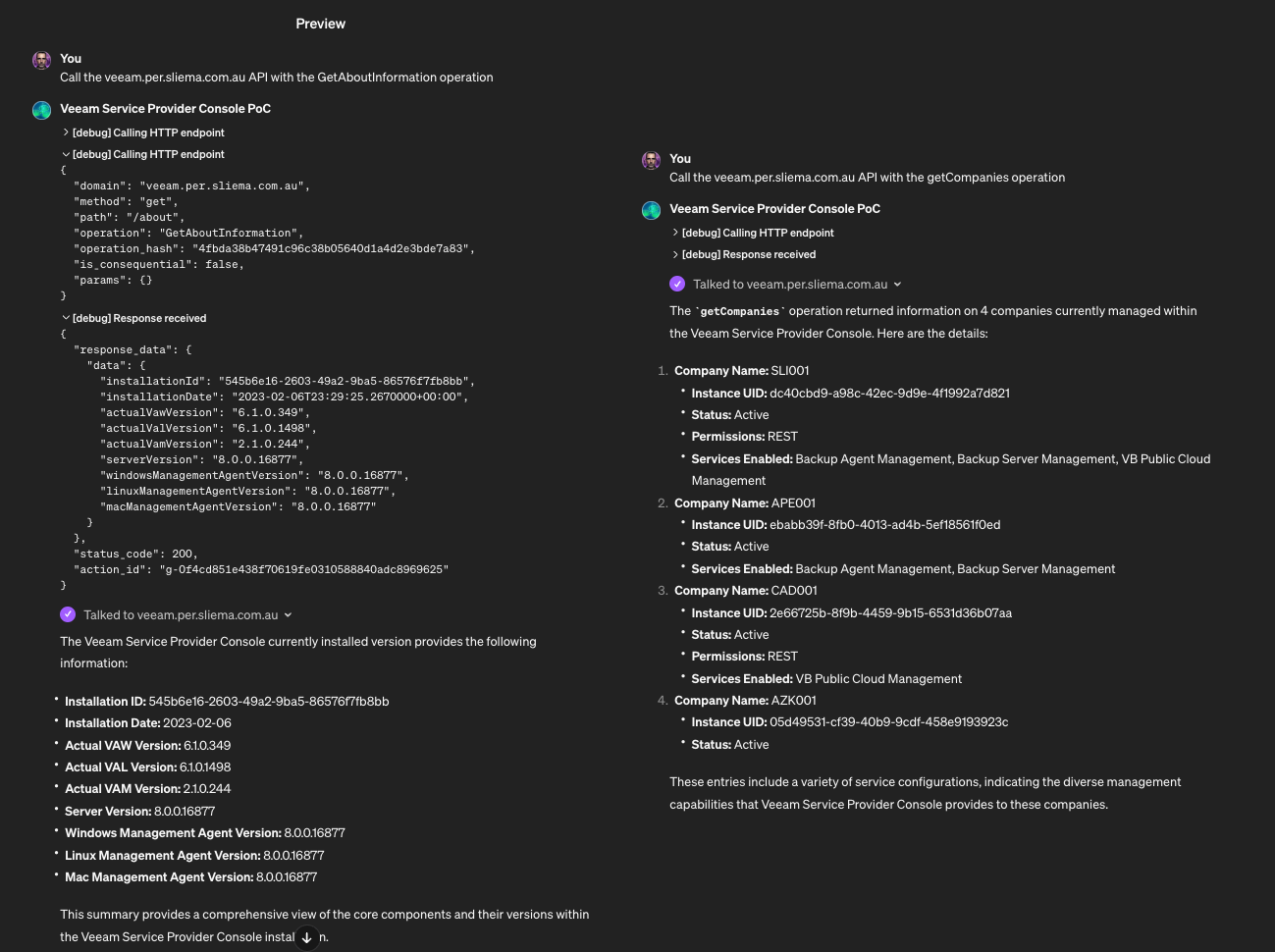

Here in the Edit Actions section (image below) is where we configure the endpoints to talk to. In this case I'm hitting the API I've exposed at https://veeam.per.sliema.com.au/api/v3 and, for the purpose of this quick example, configuring two actions. Those actions are described in the schema, which is based on the OpenAPI 3.1 specification. In essence, what we are doing here is taking an existing API specification and porting it across to a new specification that ChatGPT can interact with. To do this, you need access to the remote specification JSON file, or to a Swagger-like interface, to build out the OpenAPI schema.
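To make the schema step more concrete, here is a minimal sketch that generates an OpenAPI 3.1 document describing two read-only actions against the endpoint above. Only the base URL comes from this post; the `/about` and `/organizations/companies` paths, the `getServerInfo` and `listCompanies` operationIds, and the `limit` parameter are assumptions for illustration, so swap in the real entries from your own API specification.

```python
import json

# Base URL from the post. Everything under "paths" is a hypothetical illustration,
# not the documented Veeam Service Provider Console API.
BASE_URL = "https://veeam.per.sliema.com.au/api/v3"


def build_actions_schema(base_url: str) -> dict:
    """Return a minimal OpenAPI 3.1 document describing two read-only actions."""
    return {
        "openapi": "3.1.0",
        "info": {
            "title": "Veeam Service Provider Console (subset)",
            "version": "1.0.0",
            "description": "Two read-only endpoints exposed to a custom GPT.",
        },
        "servers": [{"url": base_url}],
        "paths": {
            "/about": {  # assumed path for server information
                "get": {
                    "operationId": "getServerInfo",  # hypothetical operationId
                    "summary": "Get information about the VSPC server",
                    "responses": {"200": {"description": "Server details"}},
                }
            },
            "/organizations/companies": {  # assumed path for tenant companies
                "get": {
                    "operationId": "listCompanies",  # hypothetical operationId
                    "summary": "List companies (tenants) and their status",
                    "parameters": [
                        {
                            "name": "limit",  # assumed paging parameter
                            "in": "query",
                            "required": False,
                            "schema": {"type": "integer", "default": 100},
                        }
                    ],
                    "responses": {"200": {"description": "A page of companies"}},
                }
            },
        },
    }


if __name__ == "__main__":
    # The printed JSON is what would be pasted into the Schema box of Edit Actions.
    print(json.dumps(build_actions_schema(BASE_URL), indent=2))
```

Trimming the spec down to only the operations you want the GPT to call also keeps the schema small and easier to debug.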

There are some included examples that give you a feel for how to build out the custom spec, and you can also import from a URL (though I couldn't get this working because the Service Provider Console schema is too big). For authentication, I'm just using an API key: I generate an access token with a curl command that uses basic authentication, and that token needs to be re-entered and saved every time it expires, as dictated by the API endpoint's security settings. This is the one manual part of this PoC example; however, more advanced authentication types, such as OAuth, can be configured.
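The manual token step could be scripted along these lines. This is a sketch only: the `/token` path and a Basic-auth-only POST are assumptions standing in for the curl command described above, and the response is assumed to follow the OAuth 2.0 shape (`access_token` plus `expires_in` in seconds). The resulting token value is what gets pasted into the GPT's API key authentication setting (presumably with the Bearer auth type).

```python
import base64
import time
import urllib.request
from typing import Optional, Tuple

# Hypothetical: the "/token" path and Basic-auth-only POST are assumptions standing in
# for the curl command in the post; check your endpoint's API reference for the real flow.
BASE_URL = "https://veeam.per.sliema.com.au/api/v3"


def build_token_request(base_url: str, username: str, password: str) -> urllib.request.Request:
    """Build (but do not send) a POST that trades Basic credentials for an access token."""
    credentials = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        f"{base_url}/token",  # assumed token path
        data=b"",
        method="POST",
        headers={"Authorization": f"Basic {credentials}"},
    )


def parse_token_response(payload: dict, now: Optional[float] = None) -> Tuple[str, float]:
    """Return (access_token, expiry as epoch seconds) from an OAuth 2.0-style response."""
    now = time.time() if now is None else now
    # RFC 6749 token responses carry access_token plus an optional expires_in (seconds).
    return payload["access_token"], now + float(payload.get("expires_in", 0))


def needs_refresh(expiry_epoch: float, now: Optional[float] = None, margin: float = 60.0) -> bool:
    """True once the token is within `margin` seconds of expiring and must be re-entered."""
    now = time.time() if now is None else now
    return now >= expiry_epoch - margin


if __name__ == "__main__":
    request = build_token_request(BASE_URL, "demo-user", "demo-password")
    print(request.get_method(), request.full_url)
    # Sending it would be urllib.request.urlopen(request), then json.load() on the body.
```

The `needs_refresh` margin is there so you re-enter the token a little before it lapses rather than discovering the expiry mid-conversation.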

Once that's done, we are ready to test the actions defined in the schema. In the right-hand pane, you can preview the results of the actions or troubleshoot any authentication or schema errors. Note that, depending on the action, running a Test runs a simulation, but as you can see below, we are getting information from the API as expected.
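Before pasting a schema in and testing it in the right-hand pane, a quick local lint can catch simple mistakes. The checks in this sketch are my own choice (a 3.1.x version string, an https server URL, unique operationIds, and a summary or description the model can read); they are assumptions about what is useful, not OpenAI's actual validation rules.

```python
from typing import List
from urllib.parse import urlparse

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def lint_actions_schema(schema: dict) -> List[str]:
    """Return human-readable problems found in an OpenAPI document (empty list means OK)."""
    problems = []
    if not str(schema.get("openapi", "")).startswith("3.1"):
        problems.append("openapi version is not 3.1.x")
    servers = schema.get("servers") or []
    if not servers:
        problems.append("no servers entry")
    for server in servers:
        # Assumption: an internet-facing action endpoint should be served over https.
        if urlparse(server.get("url", "")).scheme != "https":
            problems.append(f"server URL is not https: {server.get('url')}")
    seen = set()
    for path, item in schema.get("paths", {}).items():
        for method, op in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters"
            label = f"{method.upper()} {path}"
            op_id = op.get("operationId")
            if not op_id:
                problems.append(f"{label}: missing operationId")
            elif op_id in seen:
                problems.append(f"{label}: duplicate operationId {op_id}")
            else:
                seen.add(op_id)
            if not (op.get("summary") or op.get("description")):
                problems.append(f"{label}: no summary/description for the model to read")
    return problems


if __name__ == "__main__":
    sample = {
        "openapi": "3.1.0",
        "servers": [{"url": "http://localhost/api/v3"}],
        "paths": {"/about": {"get": {"summary": "Server info"}}},
    }
    for problem in lint_actions_schema(sample):
        print(problem)
```

Anything this flags is cheaper to fix locally than to chase through the preview pane's error messages.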

Once we have tested that the schema is working and returning results as expected, the custom GPT can be saved. At this point you can make it public and publishable, but you will need to publish a privacy policy for that to happen… if you need help with that… just ask ChatGPT.

Talking to your Server as if it was Human:

This is where the world of LLMs and Prompt Engineering comes into its own. Being able to interact and interface with a machine in a natural way is what Generative AI allows. You can also expand on the information and data returned via the configured schema and turn it into more meaningful actions. Below is a short video showing the interactions with the endpoints we have configured via the LLM… again, think of the possibilities that open up for administrators or help desk staff when they can interact with their platforms more naturally.

https://vimeo.com/912505629?share=copy

Thinking forward on the above example, a logical next step might be to ask the custom GPT to prepare the server for an upgrade and actually… maybe… patch/update it. The second example shows the possibilities for company/tenant manipulation. Having asked for the company details and which services are enabled, you could break that down and go deeper into one of the customers; with more advanced prompting, and if configured, you could ask the LLM to disable the company or turn services on or off.
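Letting the GPT disable a company would mean adding a write operation to the schema. OpenAI's Actions documentation describes an `x-openai-isConsequential` extension that, when true, makes ChatGPT ask the user for confirmation before every call, which seems like the minimum for anything destructive. In this sketch the path, the `companyUid` parameter, the `setCompanyState` operationId, and the request body are all hypothetical; the real Veeam Service Provider Console API may use a different verb or body format.

```python
import copy
import json


def add_company_state_action(schema: dict) -> dict:
    """Return a copy of `schema` with a hypothetical action that enables/disables a company."""
    updated = copy.deepcopy(schema)  # leave the caller's schema untouched
    updated.setdefault("paths", {})["/organizations/companies/{companyUid}"] = {
        "patch": {
            "operationId": "setCompanyState",  # hypothetical operationId
            "summary": "Enable or disable a company (tenant)",
            # OpenAI Actions extension: require user confirmation before every call.
            "x-openai-isConsequential": True,
            "parameters": [
                {
                    "name": "companyUid",  # hypothetical path parameter
                    "in": "path",
                    "required": True,
                    "schema": {"type": "string", "format": "uuid"},
                }
            ],
            "requestBody": {  # hypothetical body shape
                "required": True,
                "content": {
                    "application/json": {
                        "schema": {
                            "type": "object",
                            "properties": {
                                "status": {"type": "string", "enum": ["Active", "Disabled"]}
                            },
                            "required": ["status"],
                        }
                    }
                },
            },
            "responses": {"200": {"description": "Updated company"}},
        }
    }
    return updated


if __name__ == "__main__":
    print(json.dumps(add_company_state_action({"openapi": "3.1.0", "paths": {}}), indent=2))
```

Keeping read and write operations clearly separated in the schema also makes it easier to reason about exactly what the GPT is allowed to do.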

Conclusion

As we look to the horizon, the introduction of custom GPTs marks a potential shift in how we engage with technology, towards interactions that are not only more natural but also more intuitive and efficient. This Proof of Concept, integrating the Veeam Service Provider Console API into ChatGPT, gives a glimpse of the potential that lies in conversing with our systems as if they were colleagues rather than just machines. This evolution in how AI interacts with existing technology makes that technology accessible and adaptable to a broader spectrum of users. The essence of this shift is not just automation, data processing, or more efficient platform operations, but possibly creating a connection that feels genuinely human.

As we continue to refine and expand these capabilities, and learn how to work with them better, the potential for innovation is enormous, promising a future where technology becomes less complex, more efficient, and more user-friendly… though, as with all discussions around AI, there are still a lot of unknowns around security and trust.

At the end of the day, can we trust this sort of interface with complex, critical tasks?

References: https://chat.openai.com/gpts/editor