NSX Bytes: NSX-v 6.3 Host Preparation Fails with Agent VIB module not installed

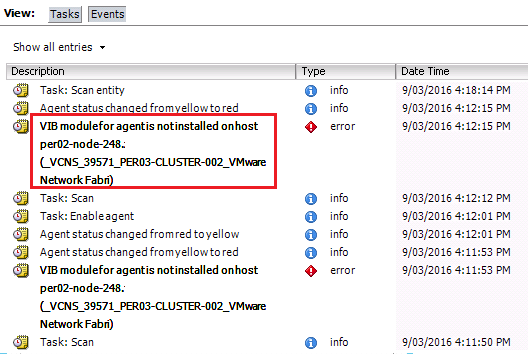

NSX-v 6.3 was [released](http://pubs.vmware.com/Release_Notes/en/nsx/6.3.0/releasenotes_nsx_vsphere_630.html) with an impressive list of new enhancements, and I wasted no time in looking to upgrade my NestedESXi lab instance from 6.2.5 to 6.3. However, I ran into an issue that at first I thought was related to an [earlier issue](http://anthonyspiteri.net/nsx-bytes-host-preparation-doesnt-complete/) caused by VMware Update Manager not being available during NSX host upgrades… in this case it presented with the same error message in the vCenter Events view:

VIB module for agent is not installed on host

After confirming that my Update Manager was in a good state I was left scratching my head… that was until some back and forth in the vExpert Slack #NSX channel pointed me to a new VMware KB article that was released the same day as NSX-v 6.3.

https://kb.vmware.com/kb/2053782

This issue occurs if vSphere Update Manager (VUM) is unavailable. EAM depends on VUM to approve the installation or uninstallation of VIBs to and from the ESXi host.

Even though my Update Manager was available, I was not able to upgrade through Host Preparation. It seems like vSphere 6.x instances might be impacted by this bug, but the good news is that there is a relatively easy workaround, mentioned in the VMware KB, that bypasses the VUM install mechanism. To enable the workaround you need to open the Managed Object Browser of the vCenter EAM by going to the following URL and entering vCenter admin credentials.

https://vCenter\_Server\_IP/eam/mob/

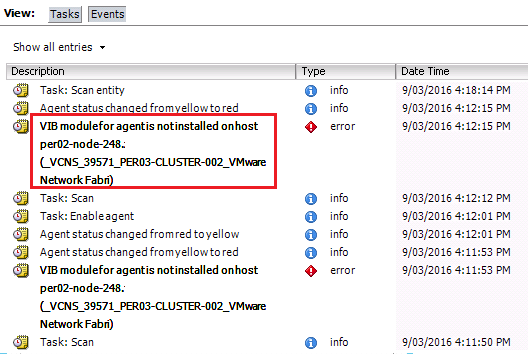

Once logged in you are presented with an agency (or a list of agencies). In my case I had more than one, but I selected the first one in the list, which was agency-11.

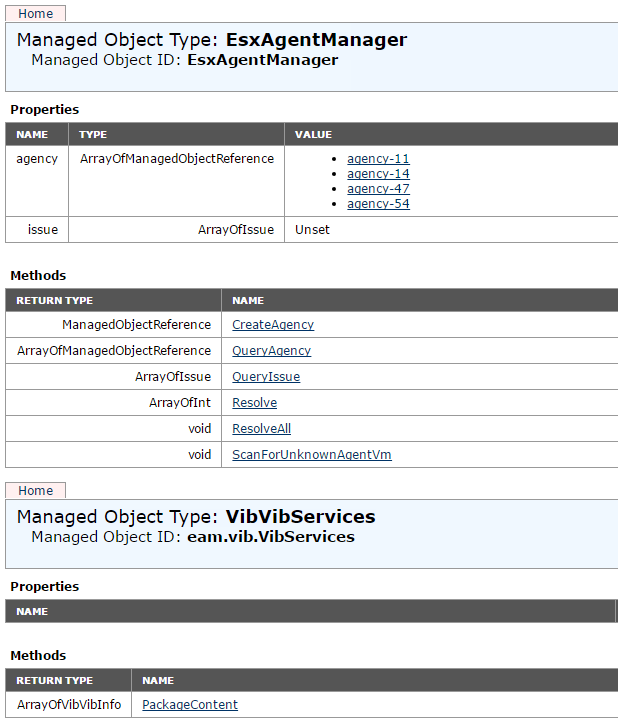

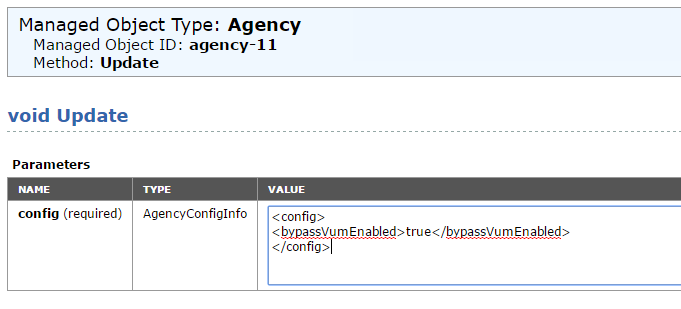

The value that needs to be changed is the bypassVumEnabled boolean value as shown below.

To set that flag to true, go to the following URL:

https://vCenter\_Server\_IP/eam/mob/?moid=agency-x&method=Update

Make sure that the agency number matches your vCenter EAM instance. From there you need to change the existing configuration by removing all the text in the value box, entering the value shown below and invoking the method:

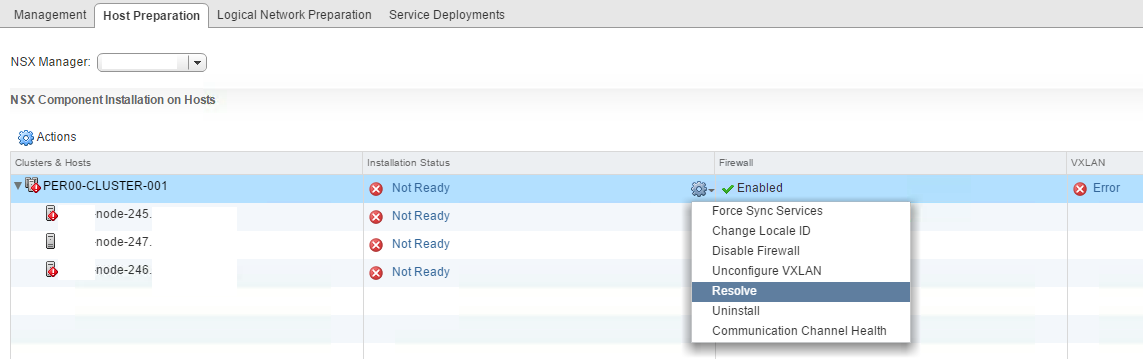
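As a rough sketch of the two pieces involved here, the Python below builds the Update URL for a given agency and the XML text to paste into the value box. The function names and the example hostname are hypothetical, and the `<config>` wrapper element is an assumption for illustration (the text above only names the `bypassVumEnabled` boolean), so compare the output against the KB before invoking anything.

```python
from urllib.parse import urlencode
import xml.etree.ElementTree as ET


def eam_update_url(vcenter: str, agency_id: str) -> str:
    """Build the EAM MOB URL for the Update method of one agency."""
    query = urlencode({"moid": agency_id, "method": "Update"})
    return f"https://{vcenter}/eam/mob/?{query}"


def bypass_vum_config(enabled: bool) -> str:
    """Return XML text for the value box.

    The <config> wrapper is an assumption; only the bypassVumEnabled
    field name comes from the post.
    """
    config = ET.Element("config")
    flag = ET.SubElement(config, "bypassVumEnabled")
    flag.text = "true" if enabled else "false"
    return ET.tostring(config, encoding="unicode")


if __name__ == "__main__":
    # Hypothetical lab hostname; agency-11 is the agency used in this post.
    print(eam_update_url("vcenter.lab.local", "agency-11"))
    print(bypass_vum_config(True))
```

Calling `bypass_vum_config(False)` gives the matching text for reverting the workaround once the hosts have been upgraded.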

Once invoked you should be able to go back into the Web Client and click on Resolve under the Cluster name in the Host Preparation Tab of the NSX Installation window.

Once done I was in an all Green state and all hosts were upgraded to 6.3.0.5007049. Once all hosts have been upgraded it might be a good idea to reverse the workaround and wait for an official fix from VMware.

References:

https://kb.vmware.com/kb/2053782
