Nested ESXi - Reduced Network Throughput with Promiscuous Mode PortGroups

We have been performance and stress testing a new NFS-connected storage platform over the past month or so, and through that testing we have seen some interesting behaviours in terms of what affects overall performance relative to total network throughput vs latency vs IOPS. Using a couple of stress test scripts, we have been able to bottleneck performance at the network layer…that is to say, all conditions being equal, we can max out a 10Gig uplink across a couple of hosts, resulting in throughput of 2.2GB/s or ~20,000Mbits/s on the switch. Effectively the backend storage (at this stage) is only limited by the network.
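As a quick sanity check on those units, here is a minimal sketch that converts the 2.2GB/s figure to Mbit/s. It assumes decimal units (1 GB = 1000 MB) and two hosts with one 10Gig uplink each; both are assumptions for illustration, and the function and variable names are made up for this example.

```python
def gbytes_to_mbits(gbytes_per_s: float) -> float:
    """Convert GB/s to Mbit/s, assuming decimal units and 8 bits per byte."""
    return gbytes_per_s * 1000 * 8


# Figure quoted in the post for the combined test throughput.
observed_mbits = gbytes_to_mbits(2.2)

# Assumption: two hosts, one 10 Gbit/s uplink each.
line_rate_mbits = 2 * 10_000

print(f"observed: {observed_mbits:.0f} Mbit/s")
print(f"share of assumed line rate: {observed_mbits / line_rate_mbits:.0%}")
```

Under those assumptions the payload throughput comes out a little below the ~20,000Mbits/s seen on the switch, which would be consistent with the uplinks being saturated once protocol overhead is counted.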

![](/images/2013/11/promis_3.png)

To perform some “real world” testing I deployed a Nested ESXi Lab comprising our vCloud Platform into the environment. For networking on the Nested ESXi Hosts (ESXi 5.0 Update 2), I backed 2x vNICs with a Distributed Switch PortGroup configured as a Trunk accepting all VLANs and accepting Promiscuous Mode traffic.

![](/images/2013/11/promis_0.png)

![](/images/2013/11/promis_4_1.png)

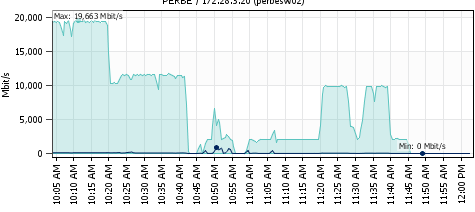

While continuing with the load testing in the parent environment (not within the nested ESXi) we started to see throughput issues while running the test scripts. Where we had been getting 1-1.2GB/s of throughput per host, all of a sudden that had dropped to 200-300MB/s. Nothing else had changed on the switching, so we were left scratching our heads.

![](/images/2013/11/promis_2.png)

From the above performance graph, you can see that performance was about 1/5 of what we had seen previously, and it looked like there was some invisible rate limiting going on. After accusing the network guy (@sjdix0n) I started to switch off VMs to see if there was any change to the throughput. As soon as all VMs were switched off, throughput returned to the expected uplink saturation levels. As soon as I powered on one of the nested ESXi hosts, performance dropped from 1.2GB/s to about 450MB/s. As soon as I turned on the other nested host, it dropped to about 250MB/s. In reverse, you can see that performance stepped back up to 1.2GB/s with both nested hosts offline.

So…what’s going on here? The assumption we made was that Promiscuous Mode was causing saturation within the Distributed vSwitch, causing the performance test to record the much lower values and not be able to send out traffic at expected speeds.
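To put the step-downs in proportion, the approximate figures quoted above can be expressed as a fraction of the 1.2GB/s baseline. This is only a rough sketch using the rounded numbers from this post; the dictionary labels and the helper name are made up for illustration.

```python
# Approximate per-host throughput with all VMs powered off (MB/s).
BASELINE_MB_S = 1200

# Rounded observations from the test runs described in the post (MB/s).
observations = {
    "no nested hosts powered on": 1200,
    "one nested host powered on": 450,
    "two nested hosts powered on": 250,
}


def fraction_of_baseline(mb_per_s: float, baseline: float = BASELINE_MB_S) -> float:
    """Return throughput as a fraction of the baseline figure."""
    return mb_per_s / baseline


for label, rate in observations.items():
    print(f"{label}: {rate} MB/s ({fraction_of_baseline(rate):.0%} of baseline)")
```

With both nested hosts powered on, the result lands at roughly a fifth of the baseline, which matches the "1/5" read off the graph.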

![](/images/2013/11/promis_1.png)

Looking at the Network Performance graph for one of the nested hosts, you can see that it was pushing traffic on both vNICs roughly equal to the total throughput that the test was generating…the periods of inactivity above were when we were starting and stopping the load tests. In a very broad sense we understand how and why this is happening, but it would be handy to get an explanation as to the specifics of why a setup like this can have such a huge effect on overall performance.

UPDATE WITH EXPLANATION: After posting this initially I reached out to William Lam (@lamw) via Twitter and he was kind enough to post an article explaining why Promiscuous Mode and Forged Transmits are required in Nested ESXi environments.

http://www.virtuallyghetto.com/2013/11/why-is-promiscuous-mode-forged.html

Word to the wise about having Nested Hosts…it can impact your environment in ways you may not expect! Is it any wonder why nested hosts are not officially supported?? :)
