Quick Fix: vSphere with Tanzu - No Default StorageClass set

Over the past few weeks I’ve posted a couple of articles that should help people new to vSphere with Tanzu (https://anthonyspiteri.net/deploying-vmware-tanzu-with-ha-proxy/). There are some common hurdles that can pop up. I’ve now deployed it in a couple of environments and each one has raised new quirks. There also isn’t a lot of straightforward content out there on how to quickly fix issues that come up after deploying a Tanzu Kubernetes Grid instance into a fresh namespace. There are Kubernetes security and Kubernetes storage volumes to deal with, and for those not familiar with or new to Kubernetes… it can drive you mad!

The Problem:

As mentioned in my first post (https://anthonyspiteri.net/no-persistent-volumes-available-claim-storage-class/), after seeing that there was no Default StorageClass set in the TKG cluster, I attempted to patch a StorageClass to make it the default. However, I found that a short time after patching the StorageClass, the IsDefaultClass flag would revert to No.

About the Kubernetes Default Storage Class

A StorageClass provides a way for administrators to describe the “classes” of storage they offer. Different classes might map to quality-of-service levels, or to backup policies, or to arbitrary policies determined by the cluster administrators. Kubernetes itself is un-opinionated about what classes represent. This concept is sometimes called “profiles” in other storage systems. Depending on the installation method, your Kubernetes cluster may be deployed with an existing StorageClass that is marked as default. This default StorageClass is then used to dynamically provision storage for PersistentVolumeClaims that do not require any specific storage class.
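To make that last point concrete, here is a minimal sketch of a PersistentVolumeClaim that names no storage class, so it depends entirely on whichever StorageClass is marked default. The claim name demo-pvc, the 1Gi size and the file name are placeholders of mine and not part of the original environment.

```shell
# Write a PVC manifest that deliberately omits spec.storageClassName,
# so Kubernetes falls back to the default StorageClass (if one is set).
# demo-pvc, 1Gi and the file name are illustrative placeholders.
PVC_FILE="demo-pvc.yaml"
cat > "$PVC_FILE" <<'EOF'
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: demo-pvc
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
EOF

# Apply only when kubectl is installed; the context should be the TKG cluster.
if command -v kubectl >/dev/null 2>&1; then
  kubectl apply -f "$PVC_FILE" || echo "apply failed (no cluster context?)"
  kubectl get pvc demo-pvc || true
fi
```

With no default StorageClass in place, a claim like this stays in Pending, which is the symptom that started this whole exercise.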

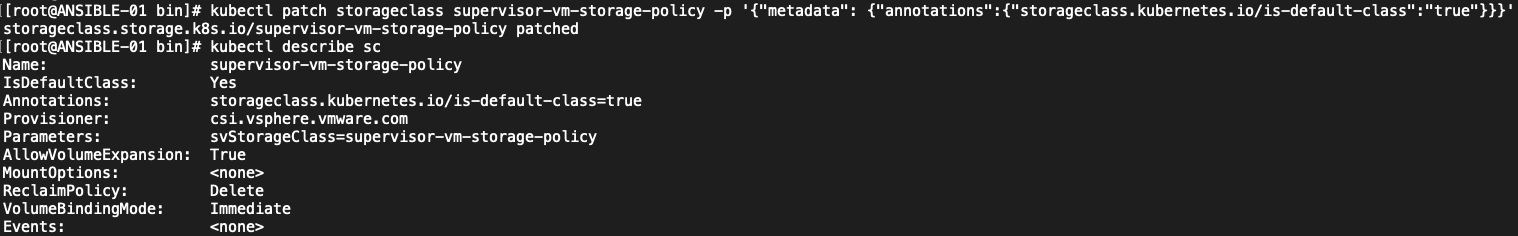

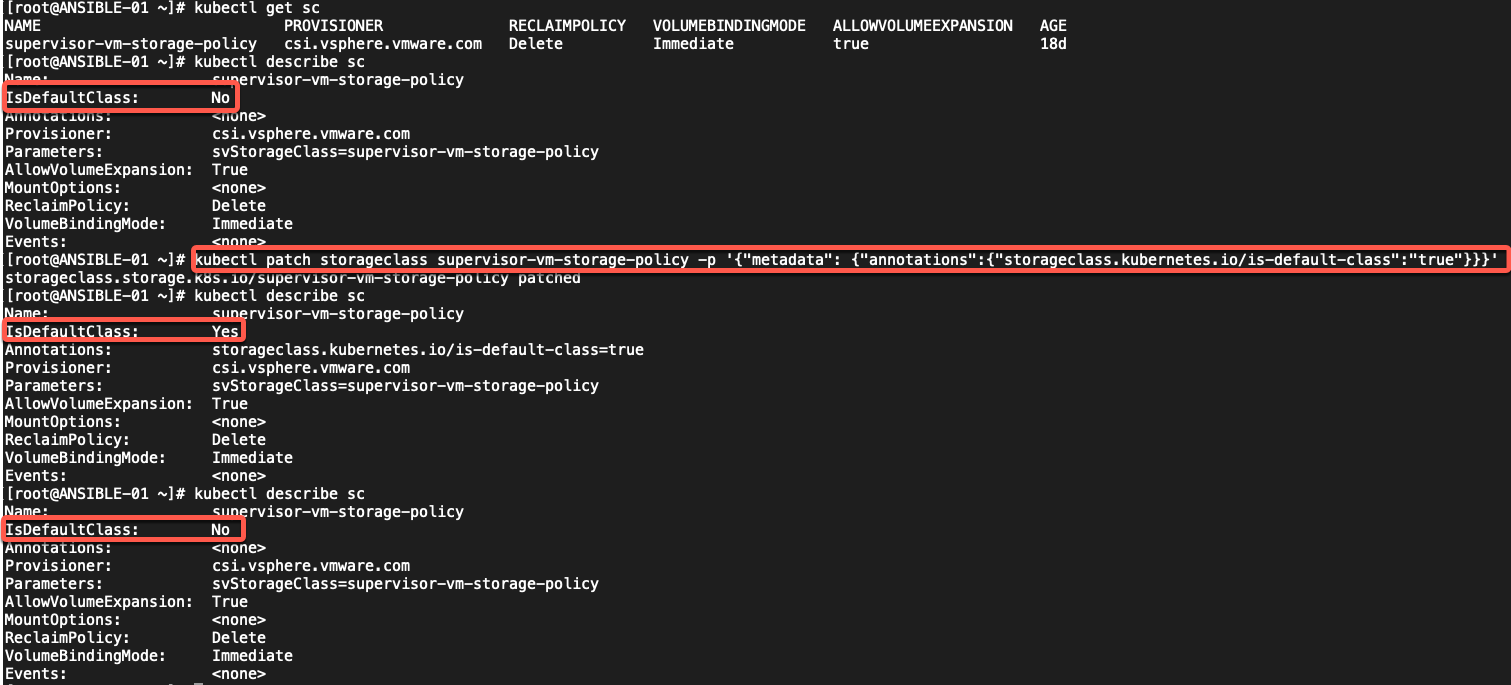

In talking with Myles Grey about this, it seemed that some self-healing operation was being run by the SuperVisor cluster to ensure that the TKG Cluster stayed in the correct state as per the deployment specification. After a little more digging, I found there is a way to patch/edit the TKG config so that it sets a Default StorageClass, and a way to specify it at deployment time so that it is set from the outset. It was pretty clear to see this self-healing in play:

# kubectl get sc

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

supervisor-vm-storage-policy csi.vsphere.vmware.com Delete Immediate true 20d

# kubectl describe sc

Name: supervisor-vm-storage-policy

IsDefaultClass: No

Annotations: cns.vmware.com/StoragePoolTypeHint=cns.vmware.com/NFS

Provisioner: csi.vsphere.vmware.com

Parameters: storagePolicyID=ba7e7cf3-9ac3-4a18-ae6e-f0f2d3f0ccf4

AllowVolumeExpansion: True

MountOptions: none

ReclaimPolicy: Delete

VolumeBindingMode: Immediate

Events: none

While in the TKG Cluster, using a kubectl patch operation, we can set the Storage Class to be default.

# kubectl patch storageclass supervisor-vm-storage-policy -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'

However, this would revert after about 60 seconds. As mentioned in my first post, there are ways to get around this when dealing with Helm Charts, but we want the default to be set and to stick.

The Fix:

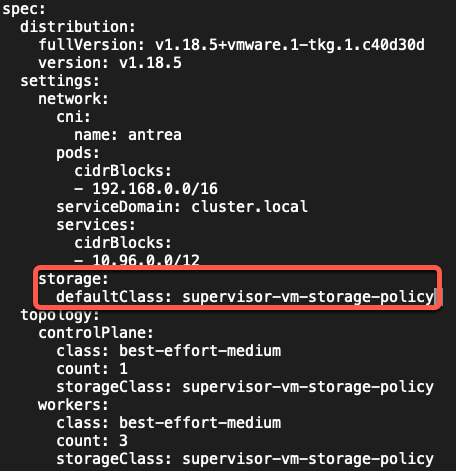

As a SuperVisor administrator, log into the SuperVisor namespace and set the context to the TKG Cluster as required. From here we need to edit the TKG Cluster by running:

# kubectl edit tanzukubernetescluster tkg-cluster-001

Then add a couple of lines, as shown below, in the settings: section of the YAML configuration.

This same entry is what is required in the original YAML configuration that defines the TKG Cluster before it is first created. When I created this cluster, the entry was not specified, so no default was defined at the SuperVisor layer.

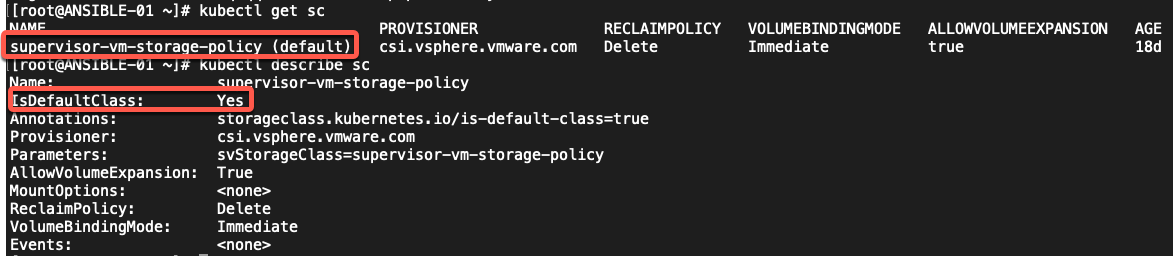
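Since the change itself only appears in the screenshot, here is a hedged sketch of the same edit in text form. It assumes the v1alpha1 TanzuKubernetesCluster API, where the default class sits under spec.settings.storage.defaultClass, and it swaps the interactive kubectl edit for a non-interactive kubectl patch. The cluster and StorageClass names are the ones used in this post; check the field path against your own cluster's API version before relying on it.

```shell
# Names taken from this post; adjust for your environment.
TKC_NAME="tkg-cluster-001"
DEFAULT_SC="supervisor-vm-storage-policy"

# Equivalent YAML in the cluster spec (assuming the v1alpha1 API):
#   spec:
#     settings:
#       storage:
#         defaultClass: supervisor-vm-storage-policy
PATCH=$(printf '{"spec":{"settings":{"storage":{"defaultClass":"%s"}}}}' "$DEFAULT_SC")
echo "$PATCH"

# Custom resources need a JSON merge patch (--type merge).
# Run this in the SuperVisor namespace context, not inside the TKG cluster.
if command -v kubectl >/dev/null 2>&1; then
  kubectl patch tanzukubernetescluster "$TKC_NAME" --type merge -p "$PATCH" \
    || echo "patch failed (wrong context or API version?)"
fi
```

The commented YAML is also the shape the entry would take in the manifest used to create the cluster in the first place.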

Once that was done, I logged back into the TKG Cluster namespace and viewed the StorageClass settings. The (default) marker now appears next to the StorageClass, and IsDefaultClass stays set to Yes.
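If you would rather check this from a script than by eye, a small helper along these lines should do. The function name is my own invention, and it simply relies on kubectl get sc printing (default) right after the name of a default class.

```shell
# Print the names of StorageClasses that kubectl marks "(default)".
# Reads `kubectl get sc` output on stdin and skips the header row.
default_storage_classes() {
  awk 'NR > 1 && $2 == "(default)" { print $1 }'
}

# Run inside the TKG cluster context; prints nothing if no default is set.
if command -v kubectl >/dev/null 2>&1; then
  kubectl get sc | default_storage_classes
fi
```

Running it again a couple of minutes later is a quick way to confirm that the setting has not been reverted.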


References:

https://kubernetes.io/docs/tasks/administer-cluster/change-default-storage-class/
