ROOK – Ceph Backed Object Storage for Kubernetes Install and Configure

A couple of weeks ago I wrote a quick post about Longhorn as a storage provider for my Kubernetes labs. While that has worked pretty well, I thought I would look at some alternatives and see how they compare. I have seen Rook-Ceph referenced and used before, but I never looked at installing it until this week. Rook enables Ceph storage systems to run on Kubernetes using Kubernetes primitives. Ceph is something that I have dabbled with since its early days, but due to some bad early experiences at my previous company I have tended to stay away from it. That said, I have noticed that it has become a strong choice in the service provider world for those looking to offer their own self-managed Object Storage platforms, and a number of companies use Ceph in the backend of their commercial Object Storage offerings.

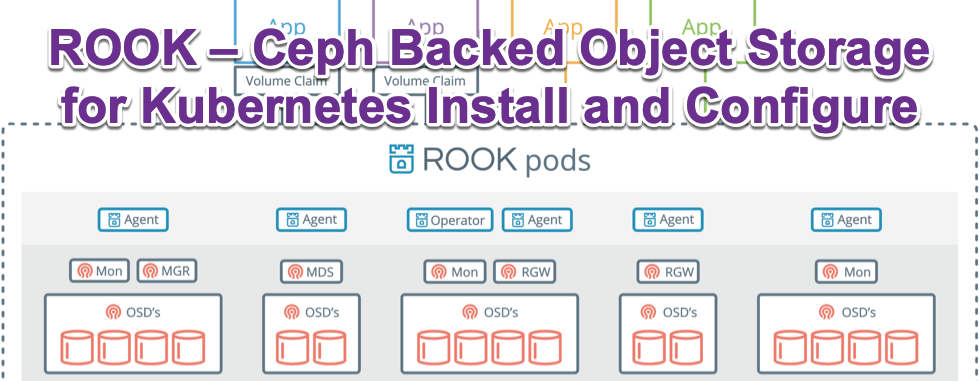

Rook turns distributed storage systems into self-managing, self-scaling, self-healing storage services. It automates the tasks of a storage administrator: deployment, bootstrapping, configuration, provisioning, scaling, upgrading, migration, disaster recovery, monitoring, and resource management. Rook uses the power of the Kubernetes platform to deliver its services via a Kubernetes Operator for each storage provider.

Rook is packaged as a storage operator for Kubernetes. It deploys Ceph and runs it in a Kubernetes cluster. From there, Kubernetes applications consume block volumes managed by Rook through the Rook Kubernetes StorageClass. The Rook operator automates configuration of the storage components and monitors the cluster to ensure the storage remains available and healthy. The great thing about it as well is that it comes ready made with a CSI driver, meaning volume snapshots can take place. This is core to running stateful applications and being able to back them up. Rook officially supports v1/v1beta1 snapshots for Kubernetes v1.17+ once the snapshot controller and the snapshot v1/v1beta1 CRDs are installed, and it allows applications to interact with its VolumeSnapshotClass.

Just like StorageClass provides a way for administrators to describe the “classes” of storage they offer when provisioning a volume, VolumeSnapshotClass provides a way to describe the “classes” of storage when provisioning a volume snapshot.
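To make that a bit more concrete, here is a minimal sketch of what a VolumeSnapshotClass for the Rook RBD CSI driver, and a VolumeSnapshot that uses it, could look like. It is written as a small Python script that emits JSON that kubectl can apply. The names are assumptions rather than anything from my cluster: the `rook-ceph` namespace, the `csi-rbdplugin-snapclass` class name and the `rook-csi-rbd-provisioner` secret follow the defaults used in Rook's example manifests, `rbd-pvc` is a hypothetical PVC, and the helper function names are made up for illustration. On older clusters the API version may need to be `snapshot.storage.k8s.io/v1beta1`.

```python
import json


def rbd_snapshot_class(name="csi-rbdplugin-snapclass", namespace="rook-ceph",
                       api_version="snapshot.storage.k8s.io/v1"):
    """Build a VolumeSnapshotClass for the Rook RBD CSI driver.

    Assumes the operator runs in `namespace`, so the driver name is
    "<namespace>.rbd.csi.ceph.com" and the clusterID equals the namespace.
    """
    return {
        "apiVersion": api_version,
        "kind": "VolumeSnapshotClass",
        "metadata": {"name": name},
        "driver": f"{namespace}.rbd.csi.ceph.com",
        "parameters": {
            "clusterID": namespace,
            # Secret name assumed from Rook's example manifests.
            "csi.storage.k8s.io/snapshotter-secret-name": "rook-csi-rbd-provisioner",
            "csi.storage.k8s.io/snapshotter-secret-namespace": namespace,
        },
        "deletionPolicy": "Delete",
    }


def volume_snapshot(name, pvc_name, snapshot_class="csi-rbdplugin-snapclass",
                    namespace="default",
                    api_version="snapshot.storage.k8s.io/v1"):
    """Build a VolumeSnapshot request for an existing PVC."""
    return {
        "apiVersion": api_version,
        "kind": "VolumeSnapshot",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "volumeSnapshotClassName": snapshot_class,
            "source": {"persistentVolumeClaimName": pvc_name},
        },
    }


def as_list(items):
    """Wrap several objects in a v1 List so they can be applied in one go."""
    return {"apiVersion": "v1", "kind": "List", "items": list(items)}


if __name__ == "__main__":
    # "rbd-pvc" is a hypothetical PVC name; replace it with a real claim.
    bundle = as_list([
        rbd_snapshot_class(),
        volume_snapshot("rbd-pvc-snapshot", "rbd-pvc"),
    ])
    print(json.dumps(bundle, indent=2))
```

Assuming the script is saved as `snapshot.py` (a hypothetical file name), the output can be piped straight into `kubectl apply -f -`.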

Installation and Initial Configuration

I go through the install in full, end to end, using kubectl in the YouTube video below. It is a straightforward install, but there are some things that need to be done to get the Ceph Dashboard exposed via a Kubernetes load balancer. I also go through setting up the Kubernetes snapshot configuration with Rook's RBD CSI driver, as well as testing it all out with Kubestr from Kasten. https://youtu.be/cR-s26Zzx4Y A full end-to-end CLI walkthrough is played below, with all steps able to be copied from the embedded player.

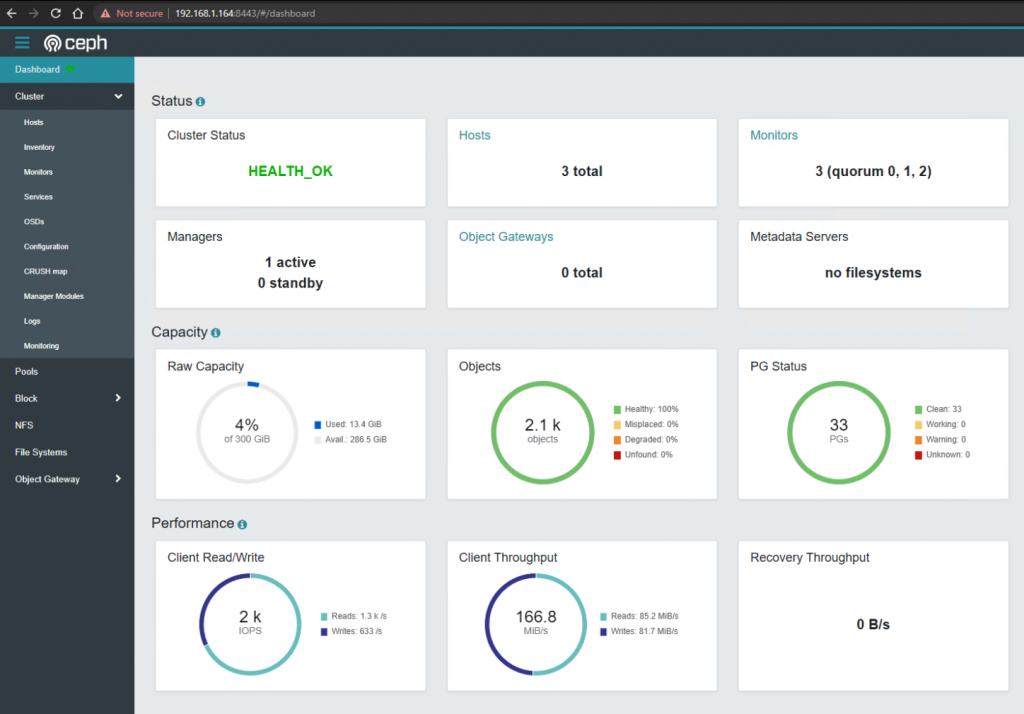

Ceph Dashboard

The Ceph Dashboard is installed as part of the setup, but needs to be exposed. In the setup capture above, I go through how to expose it via a LoadBalancer IP after Rook has been deployed.
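As a rough sketch of what that exposure step involves, the script below builds a LoadBalancer Service that points at the Ceph manager pods and prints it as JSON for kubectl. This is not lifted from my walkthrough; it assumes the default `rook-ceph` namespace, the `app: rook-ceph-mgr` pod label, and the dashboard listening on port 8443 with SSL enabled (it would be 7000 with SSL disabled). The function name and the Service name are illustrative.

```python
import json


def dashboard_loadbalancer(namespace="rook-ceph", port=8443,
                           name="rook-ceph-mgr-dashboard-loadbalancer"):
    """Build a LoadBalancer Service in front of the Ceph mgr dashboard.

    Assumes the mgr pods carry the labels below, which is what a default
    Rook deployment in `namespace` is expected to use.
    """
    selector = {"app": "rook-ceph-mgr", "rook_cluster": namespace}
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {
            "name": name,
            "namespace": namespace,
            "labels": dict(selector),
        },
        "spec": {
            "type": "LoadBalancer",
            "selector": selector,
            "ports": [{
                "name": "dashboard",
                "port": port,
                "protocol": "TCP",
                "targetPort": port,
            }],
        },
    }


if __name__ == "__main__":
    print(json.dumps(dashboard_loadbalancer(), indent=2))
```

Once applied, `kubectl -n rook-ceph get service` should show the external IP handed out by whatever load balancer implementation the cluster uses.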

CSI Plugin

The Kubernetes CSI plugin calls Rook-Ceph to create volumes that hold persistent data for Kubernetes workloads. The CSI plugin gives you the ability to create, delete, attach, detach and mount a volume, and to take snapshots of it. The Kubernetes cluster internally uses the CSI interface to communicate with the Rook RBD CSI plugin, which then communicates with Ceph. More information on that can be found in the Rook snapshot documentation: https://rook.io/docs/rook/v1.7/ceph-csi-snapshot.html

References

https://rook.io/docs/rook/v1.7/quickstart.html

https://rook.io/docs/rook/v1.7/ceph-csi-snapshot.html
