VMware Labs: Top 5 Flings

For those who are not aware, VMware has had its Lab Flings going for a number of years now, and on the back of the latest release (ESXi Embedded Host Clie...

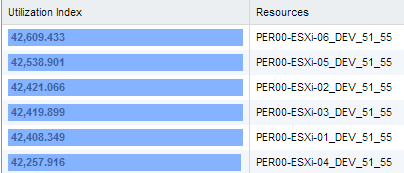

Late last year I was load testing a new storage platform using both physical and nested ESXi hosts. At the time I noticed decreased network throughp...