Automated Configuration of Backup & Replication with PowerShell

As part of the Veeam Automation and Orchestration for vSphere project that Michael Cade and I worked on for VMworld 2018, we combined a number of separate p...

Last week I attended the Sydney and Melbourne VMUG UserCons and, apart from sitting in on some great sessions, I came away from both events with a renewed sens...

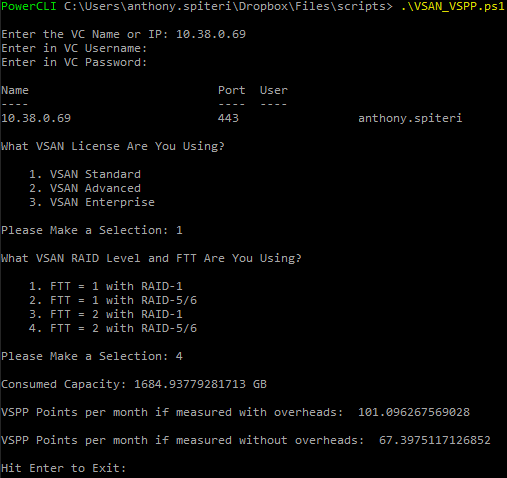

There is no doubt that the new pricing for vCAN Service Providers, announced just after VSAN 6.2 was released, meant that Service Providers looking at VS...

Over the past couple of weeks I've been helping our Ops Team decommission an old storage array. Part of the process is to remove the datastore mounts and pat...

We have recently been working through a product where knowing and reporting on VM Max Read/Write IOPS was critical. We needed a way to be able to provide rep...

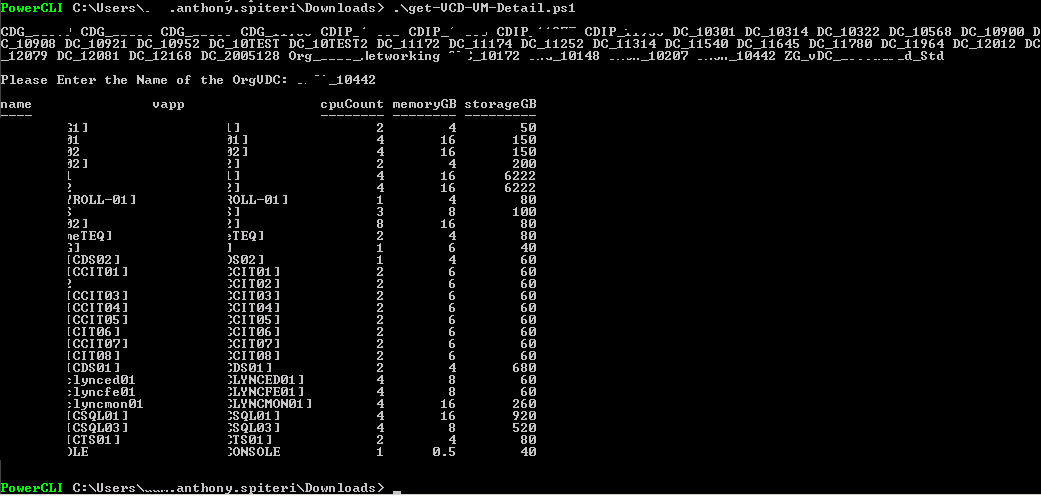

I had been looking for a way to get quick reports from our vCloud Zones using PowerCLI that reports on VM Allocated Usage. Basically I wanted to get a list o...