Install and Configure oVirt for KVM Homelab (CLI)

At VeeamON, we announced that Veeam Backup for RHV would be coming soon (https://anthonyspiteri.net/veeam-backup-for-rhv/). In a subsequent post, I wrote down some of the observations I had made over the past few months talking to customers and partners who have chosen to go down the KVM/Red Hat Virtualisation path, also outlining some of the improvements in a quickly maturing KVM virtualisation stack. At the centre of that stack is HostedEngine, which powers oVirt. This is what is driving the uptick in KVM interest. At the time, I had got a single host homelab instance of KVM with oVirt up and running for some labbing, but it was a very painful experience to say the least. With that, I wanted to revisit the install and document the steps taken to get to a working NestedESXi lab environment. In Part 1, I covered the deployment and configuration of a single host KVM server… and in Part 2, I covered the Hosted Engine install that runs oVirt as done through Cockpit.

I wanted to quickly follow that up by showing the command line way to achieve the oVirt Engine deployment and configuration.

Installing with hosted-engine Command:

The oVirt Engine runs as a virtual machine on self-hosted engine nodes (specialized hosts) in the same environment it manages. A self-hosted engine environment requires one less physical server, but requires more administrative overhead to deploy and manage. The Engine is highly available without external HA management.

The minimum setup of a self-hosted engine environment includes one oVirt Engine virtual machine that is hosted on the self-hosted engine nodes. The Engine Appliance is used to automate the installation of an Enterprise Linux 8 virtual machine, and the Engine on that virtual machine. The self-hosted engine installation uses Ansible and the Engine Appliance (a pre-configured Engine virtual machine image) to automate the installation tasks. End to end, this took about 30-40 minutes on my home setup with the VMs living on high speed NVMe datastores. There are a couple of ways to install and configure oVirt with the Hosted Engine setup. The example below shows how to do it from the command line.

Before the oVirt setup can begin, a few things need to be configured and installed from the KVM host CLI.

# dnf -y install https://resources.ovirt.org/pub/yum-repo/ovirt-release44.rpm

# yum module -y enable javapackages-tools

# yum module -y enable pki-deps

# yum module -y enable postgresql:12

# yum -y update

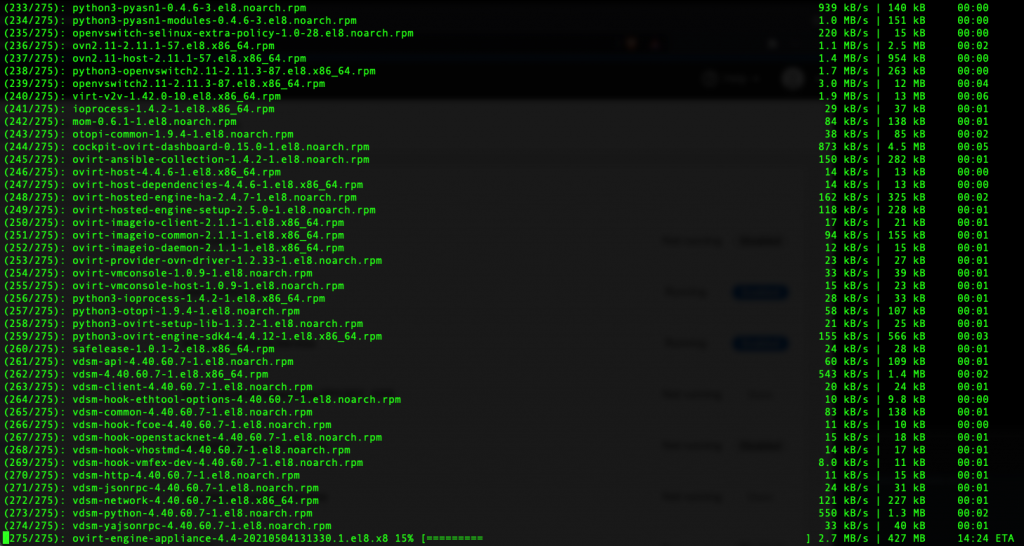

# dnf -y install cockpit cockpit-ovirt-dashboard gluster-ansible-roles ovirt-engine-appliance

The commands above add the oVirt release repository to the system, enable a few required modules and tools, including PostgreSQL, and install Cockpit and the Engine Appliance.
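The host preparation commands above can also be collected into one small script. This is only a sketch: the `run` and `prepare_host` functions and the `DRY_RUN` variable are names I have made up for this example, and by default the script only prints the commands so they can be reviewed first.

```shell
#!/bin/sh
# Sketch: wrap the host preparation steps from this post in one function.
# DRY_RUN is a hypothetical switch for this example. It defaults to 1, which
# only prints each command. Set DRY_RUN=0 and run as root to execute them.

run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

prepare_host() {
    # Add the oVirt 4.4 release repository
    run dnf -y install https://resources.ovirt.org/pub/yum-repo/ovirt-release44.rpm || return 1

    # Enable the module streams listed in the post
    for module in javapackages-tools pki-deps postgresql:12; do
        run yum module -y enable "$module" || return 1
    done

    # Bring the host up to date, then install Cockpit and the Engine Appliance
    run yum -y update || return 1
    run dnf -y install cockpit cockpit-ovirt-dashboard gluster-ansible-roles ovirt-engine-appliance || return 1
}

prepare_host
```

Stopping at the first failed command (the `|| return 1` on each step) avoids starting the appliance install on top of a half-configured repository setup.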

From here, for a self-contained KVM host, we can run the hosted-engine --deploy command to trigger the initial configuration steps.

Compared to the Cockpit HostedEngine configuration process, this to me is a little more streamlined. Most of the steps can be left at their default options, but the same settings need to be entered. Outside of the [Default Options], below is a list of what I needed to configure as part of the CLI deployment.

Please specify which way the network connectivity should be checked (ping, dns, tcp, none) : none

Please provide the FQDN you would like to use for the engine.

Note: This will be the FQDN of the engine VM you are now going to launch,

it should not point to the base host or to any other existing machine.

Engine VM FQDN: []: ovirt-engine3.sliema.lab

Enter root password that will be used for the engine appliance: ******

Confirm appliance root password: ******

How should the engine VM network be configured? (DHCP, Static)[DHCP]: Static

Please enter the IP address to be used for the engine VM []: 192.168.1.56

Enter engine admin password: *******

Confirm engine admin password: ******

Please specify the storage you would like to use (glusterfs, iscsi, fc, nfs):

Please specify the full shared storage connection path to use (example: host:/path): 192.168.1.50:/nfs/exports/ovirt/data

So, for the CLI config, there is a total of eight (8) items to enter! Much more streamlined! Below is the full (unedited) end to end install as performed on my homelab, running the VM on top of the line NVMe. You can see that it still took 40 minutes to complete the process… but if you skip through, you get a good look at what is happening with the Ansible playbook orchestrating the whole thing.
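Since a full run takes around 40 minutes, it can be worth sanity-checking the answers from the prompts above before starting. The helpers below are a minimal sketch of that idea. The function names are hypothetical (they are not part of oVirt), and they only check the shape of the values, not whether the engine name resolves in DNS or the NFS export is reachable.

```shell
#!/bin/sh
# Sketch: sanity-check hosted-engine answers before a long deployment run.
# All function names are hypothetical helpers made up for this example.

# Succeeds if $1 is a dotted-quad IPv4 address with each octet in 0-255.
is_ipv4() {
    case $1 in
        ''|*[!0-9.]*|.*|*.|*..*) return 1 ;;
    esac
    local old_ifs="$IFS" octet
    IFS=.
    set -- $1          # split the address on dots into positional parameters
    IFS="$old_ifs"
    [ $# -eq 4 ] || return 1
    for octet in "$@"; do
        [ "${#octet}" -le 3 ] && [ "$octet" -le 255 ] || return 1
    done
}

# Succeeds if the engine FQDN ($1) has a domain part and is not the host's own name ($2).
check_engine_fqdn() {
    local engine host
    engine=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
    host=$(printf '%s' "$2" | tr '[:upper:]' '[:lower:]')
    case $engine in
        *.*) ;;
        *) echo "engine name is not fully qualified: $1" >&2; return 1 ;;
    esac
    if [ "$engine" = "$host" ]; then
        echo "engine FQDN must not be the host's own FQDN" >&2
        return 1
    fi
}

# Succeeds if $1 looks like host:/path, the format the storage prompt asks for.
is_storage_path() {
    case $1 in
        ?*:/?*) return 0 ;;
        *) return 1 ;;
    esac
}

# Values taken from the prompts in this post
is_ipv4 "192.168.1.56" && echo "engine IP looks valid"
check_engine_fqdn "ovirt-engine3.sliema.lab" "$(hostname -f 2>/dev/null)" && echo "engine FQDN looks valid"
is_storage_path "192.168.1.50:/nfs/exports/ovirt/data" && echo "storage path looks valid"
```

The FQDN check mirrors the note in the installer prompt that the engine name should not point to the base host. It does not cover the "any other existing machine" part, which still needs a manual look at DNS.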
